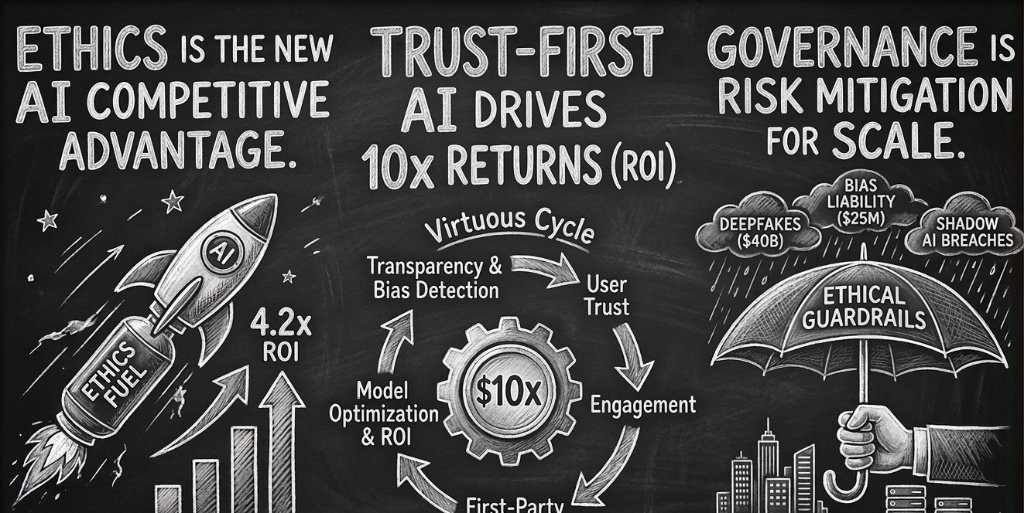

Ethics Isn't a Constraint: It's Your New Competitive Advantage

Discover why ethical AI is the ultimate risk mitigation strategy in 2025–2026. Data reveals that trust-first AI drives 4.2x ROI while unmanaged systems cost millions.

The widespread institutionalization of artificial intelligence has reached a critical inflection point where the ability to govern a model is now more valuable than the model itself. In the initial surge of 2023 and 2024, organizations prioritized rapid deployment and experimental pilots, often relegating "Responsible AI" to the periphery of legal compliance or public relations.

As of late 2025—and looking ahead into 2026—the strategic landscape has shifted toward a "Trust-First" paradigm, where ethical guardrails are recognized not as inhibitors of speed, but as the essential infrastructure for sustainable scale. Research from the Stanford 2025 AI Index reveals that while 78% of organizations have integrated AI into at least one business function, the financial delta between "AI Leaders" and "Laggards" is increasingly defined by the maturity of their ethical frameworks.

"When an organization prioritizes transparency and bias detection, it fosters deep-seated trust with its users—creating a self-reinforcing loop of value that competitors with opaque systems cannot replicate."

The economic imperative for this shift is grounded in the reality of the "Virtuous Cycle": transparency and bias detection foster trust → trust drives engagement → engagement generates superior first-party data → data improves AI accuracy. In an era where a single deepfake incident can cost an enterprise over $500,000 and biased algorithms can lead to $25 million in liability, ethics has transitioned from a moral consideration to a primary risk mitigation strategy.

Responsible AI Drives Valuations

The economic justification for ethical AI rests on the tangible reduction of risk-weighted capital requirements and the acceleration of enterprise value. Global corporate investment in AI reached $252.3 billion in 2024, with generative AI attracting $33.9 billion in private investment, an 18.7% increase year-over-year. Yet ROI remains concentrated among the 6% of organizations that qualify as "AI High Performers."

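These high performers attribute more than 10% of their EBIT directly to AI. They follow the 10-20-70 principle: roughly 10% of the effort goes to algorithms, 20% to technology and data, and 70% to people, process, and cultural transformation.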

This statistical divergence suggests that the "AI Boom" is fundamentally different from the "Internet Boom." While the internet rewarded rapid scale, the AI era rewards trusted scale. Organizations are shifting technology budgets—projected to rise from 8% of revenue in 2024 to 14% in 2025—toward unified AI platforms that offer built-in governance, security, and explainability.

Defending Against Deepfakes & Failures

Ethical AI serves as the primary defense mechanism against the rising tide of generative fraud and autonomous liability.

The phenomenon of "Shadow AI"—where employees use unauthorized AI tools to handle sensitive data—has become a massive security vulnerability. Organizations that utilize AI extensively within their security operations shortened breach containment times by 80 days and lowered average costs by $1.9 million.

The Hardening Legal Framework

Landmark cases in 2024–2025, such as Moffatt v. Air Canada, established that a company is legally responsible for "hallucinations" or incorrect advice provided by its chatbot. The "Reasonable Professional" standard now includes a technology competence requirement: if your AI gets it wrong, you are liable, not the vendor.

The Pillars of Algorithm Trust

Transparency is the foundational layer of any AI strategy intended to survive the "reputation divide" of 2026. In fact, 48% of CX leaders identify a lack of transparency as their single biggest risk in AI-driven customer experience.

Mechanisms of Enterprise Transparency

- Model Cards: Communicate a model's approach, performance, and limitations.

- Explainable AI (XAI): Move from "AI-washing" to "AI-due-diligence."

- Retrieval-Augmented Generation (RAG): Ensure outputs are connected to verifiable sources.
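
To make the RAG mechanism concrete, here is a minimal Python sketch of the grounding step: retrieve the most relevant first-party passages, then build a prompt that forces the model to cite them. Everything in it is an assumption for illustration. The `DOCUMENTS` knowledge base, the function names, and the simple keyword-overlap scoring are hypothetical stand-ins (production systems typically use embedding-based search), and no actual model call is made.

```python
import re

# Hypothetical first-party knowledge base: source id -> passage text.
DOCUMENTS = {
    "refund-policy": "Refunds are available within 30 days of purchase with a receipt.",
    "shipping-faq": "Standard shipping takes 5 business days within the country.",
    "privacy-notice": "Customer data is used only with consent and never sold.",
}


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank sources by keyword overlap with the question (toy retriever)."""
    question_tokens = tokenize(question)
    scored = []
    for source_id, text in documents.items():
        overlap = len(question_tokens & tokenize(text))
        if overlap > 0:
            scored.append((overlap, source_id))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [source_id for _, source_id in scored[:top_k]]


def build_grounded_prompt(question, documents, top_k=2):
    """Return (prompt, cited_source_ids), or (None, []) if nothing matches."""
    sources = retrieve(question, documents, top_k)
    if not sources:
        # No supporting source: better to decline than to let the model guess.
        return None, []
    context = "\n".join(f"[{sid}] {documents[sid]}" for sid in sources)
    prompt = (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n"
        f"Question: {question}"
    )
    return prompt, sources


prompt, cited = build_grounded_prompt(
    "How many days do I have to request refunds?", DOCUMENTS
)
```

The design choice worth noting is the early return: when no source supports the question, the sketch refuses to build a prompt at all, which is one simple way to keep every output traceable to a verifiable source.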

Bias detection is equally critical. In 2025–2026, bias is recognized as a structural risk that can lead to systemic discrimination. Laws such as New York City's Local Law 144 prohibit employers from using automated employment decision tools unless the tool has undergone an independent bias audit.
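
As a rough illustration of what a bias audit of a hiring tool can measure, the Python sketch below computes each group's selection rate and its impact ratio (the group's rate divided by the highest group's rate). The group labels, the sample counts, and the 0.8 flag threshold (the common "four-fifths" rule of thumb) are assumptions chosen for illustration, not figures from this article and not a statement of what any law requires.

```python
from collections import Counter


def impact_ratios(outcomes):
    """Compute selection rate and impact ratio per group.

    outcomes: iterable of (group, was_selected) pairs.
    Returns {group: (selection_rate, impact_ratio)}.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1

    rates = {group: selected[group] / totals[group] for group in totals}
    best_rate = max(rates.values())
    if best_rate == 0:
        # Nobody was selected, so ratios are undefined; report zeros.
        return {group: (0.0, 0.0) for group in rates}
    return {group: (rate, rate / best_rate) for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose impact ratio falls below the (assumed) threshold."""
    return sorted(g for g, (_, ratio) in ratios.items() if ratio < threshold)


# Hypothetical screening results: 40 of 100 selected in group A, 24 of 100 in B.
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 76
)
ratios = impact_ratios(sample)
flagged = flag_groups(ratios)
```

In this made-up sample, group B's selection rate is well below group A's, so it is the one flagged for review.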

The High-Stakes Safety Net

The third pillar of the Trust-First strategy ensures that high-stakes decisions always have a human safety net. This is particularly critical in healthcare and finance, where "unsupervised AI" can lead to catastrophic failure.
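
One simple way to build such a safety net is to route decisions by stakes and model confidence, so that anything high-stakes or uncertain goes to a person. The Python sketch below shows the idea. The `Decision` type, the `high_stakes` flag, the case data, and the 0.9 confidence threshold are all hypothetical; real thresholds would need to be set and validated per use case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    case_id: str
    recommendation: str
    confidence: float  # model-reported score in [0, 1] (assumed available)
    high_stakes: bool  # e.g. clinical or credit decisions


def route(decision, min_confidence=0.9):
    """Return 'human_review' or 'auto_approve' for a model decision.

    High-stakes cases always go to a human, regardless of confidence;
    low-stakes cases are automated only above the confidence threshold.
    """
    if decision.high_stakes or decision.confidence < min_confidence:
        return "human_review"
    return "auto_approve"


# Hypothetical queue of model outputs.
queue = [
    Decision("c-1", "approve refund", 0.97, high_stakes=False),
    Decision("c-2", "flag malnutrition risk", 0.99, high_stakes=True),
    Decision("c-3", "approve refund", 0.62, high_stakes=False),
]
routes = {d.case_id: route(d) for d in queue}
```

Note that case `c-2` is sent to a human even at 0.99 confidence: in this sketch, stakes override confidence by design.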

One healthcare organization developed an AI tool for malnutrition detection that generated $20M in revenue impact by identifying high-risk patients for clinical nutrition teams.

Frontier models achieve 80% accuracy (surpassing a human associate's 71%), yet "AI verification isn't optional—it's a professional obligation."

Service reps using "Agent Assist" tools handle 80% of queries with minimal input, while humans remain the "system of action."

How Ethics Drives Data and Engagement

The core thesis of a Trust-First AI strategy is that ethics is the engine of a virtuous cycle, not the brake.

Trust Creation

Transparency and bias detection build confidence. 68% of consumers are more likely to trust AI with human-like empathy.

Higher Engagement

When users trust an AI system, they use it more deeply. Conversational interactions reached 26B for Bank of America.

Superior Data Acquisition

Engagement provides 'first-party data'—clean, consented, and contextually relevant for privacy compliance.

Model Optimization

Better data allows precise tuning and grounding, reducing hallucinations and improving output accuracy.

Competitive Advantage

The resulting system is more personalized, building more trust—restarting the cycle.

The Compliance Paradox

In 2025–2026, the global regulatory landscape is a patchwork of conflicting mandates.

| Jurisdiction | Framework | Direction | Key Focus |
|---|---|---|---|
| European Union | EU AI Act (2024) | Stricter accountability | Risk-based bans; pre-market testing |
| United States (Fed) | Dec 2025 Exec Order | Deregulation focus | Centralized policy; challenges state laws |
| US States (CA, NY) | Local Laws | Stricter accountability | Bias audits; expanded liability |

This creates a "compliance paradox": U.S. federal policy aims to centralize and deregulate, while international standards and U.S. states move toward stricter accountability. The strategic path forward is to adopt a "Global Ethical Baseline": apply the highest standards of transparency and fairness across all jurisdictions to build a future-proof, trust-first brand identity.

Finance, Healthcare, and Marketing

Banking and Finance: The Second-Largest AI Spender

The banking industry is projected to invest $31.3 billion in AI by the end of 2025. High-performing banks use AI for Lead Scoring and Churn Prediction while maintaining a unified data architecture for accuracy. For example, Lloyds Banking Group utilized Trustwise's "Optimize:ai" to reduce generative AI operational costs and carbon footprints while ensuring compliance with internal policies and external regulations.

Healthcare: Beyond Documentation to Clinical ROI

Healthcare AI adoption is occurring at 2.2 times the rate of the broader economy. The focus has shifted from "what GenAI can do" to "what GenAI must do" to improve patient outcomes. Clinicians report a 77% effort reduction in charting through ambient listening tools. The most compelling clinical ROI includes:

- Malnutrition detection programs generating $20M in revenue impact

- Stroke response optimization saving $70K–$120K per patient

- Aggregate impact of 700 lives saved and $100M in documented healthcare ROI in 2025

Marketing and SEO: The New GEO Standard

98% of marketing leaders now leverage AI for customer engagement. In 2025–2026, the focus is on "Generative Engine Optimisation" (GEO)—structuring content to be accurately retrieved and cited by AI search engines. Since AI Overviews (AIO) now appear for 55% of searches and reduce organic clicks by 35%, competitive advantage belongs to those who create "AIO-ready" content: fact-dense, structured, and highly authoritative.

Quantifying the ROI of Ethics

| Strategy Component | Impact on ROI / Cost | Key Statistic |
|---|---|---|
| Extensive AI Security | −$1.9 Million (Breach Cost) | 80 days faster containment |
| Trust-First CX | +75% Engagement | Abandonment drops to 25% |
| Bias Mitigation | +22.6% Productivity | Early adopters gain advantage |
| Responsible Scaling | 10.3× Return | Top 6% of performers |
| Shadow AI Governance | −$670,000 (Risk Reduction) | Per potential breach avoided |

The Risk-Adjusted ROI (Ethical AI Value) Formula

Variable Definitions

| Variable | Name | Description |
|---|---|---|
| E_p | Productivity Gains | Efficiency boosts, time saved, and automated labor. |
| R_g | Revenue Growth | New market share, higher LTV (Life-Time Value) from user trust. |
| C_o | Operational Costs | Compute, tokens, talent, and maintenance. |
| P_f | Probability of Failure | The % chance of a hallucination, breach, or bias event. |
| L_c | Liability Costs | Fines, legal fees, and "Brand Erosion" costs (the $40B mentioned). |
| I_i | Initial Investment | The R&D and implementation capital. |
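
The formula itself does not appear in this copy of the article. One form consistent with the variable definitions above, offered as an assumption rather than the author's exact expression, is Risk-Adjusted ROI = ((E_p + R_g) − (C_o + P_f × L_c)) / I_i: expected benefits, minus operating costs and probability-weighted liability, divided by the initial investment. The Python sketch below evaluates that assumed form for two hypothetical programs. All input figures are made up for illustration, apart from the $25 million liability figure, which echoes the bias-liability number cited earlier in the article.

```python
def risk_adjusted_roi(e_p, r_g, c_o, p_f, l_c, i_i):
    """Assumed form: ((E_p + R_g) - (C_o + P_f * L_c)) / I_i.

    e_p: productivity gains        r_g: revenue growth
    c_o: operational costs         p_f: probability of failure (0 to 1)
    l_c: liability costs           i_i: initial investment
    """
    if not 0.0 <= p_f <= 1.0:
        raise ValueError("p_f must be a probability between 0 and 1")
    if i_i <= 0:
        raise ValueError("i_i must be positive")
    expected_liability = p_f * l_c  # probability-weighted cost of a failure
    return ((e_p + r_g) - (c_o + expected_liability)) / i_i


# Hypothetical program without governance: cheaper to run, riskier.
ungoverned = risk_adjusted_roi(
    e_p=2_000_000, r_g=1_000_000, c_o=800_000,
    p_f=0.10, l_c=25_000_000, i_i=1_000_000,
)  # roughly -0.30

# Same program with governance: higher cost and investment, lower failure risk.
governed = risk_adjusted_roi(
    e_p=2_000_000, r_g=1_000_000, c_o=1_000_000,
    p_f=0.01, l_c=25_000_000, i_i=1_200_000,
)  # roughly 1.46
```

Under these made-up inputs, the governed program costs more up front yet comes out ahead, because the probability-weighted liability term dominates the ungoverned case.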

Addressing the Speed-Governance Trade-off

Critics argue that strict ethical governance slows down innovation, allowing faster, less-regulated competitors to capture market share. This is the "Governance Paradox." However, data from 2024–2025, with implications through 2026, suggests that speed without governance is a "false velocity."

43% of business leaders acknowledged that their 2024 AI initiatives fell short of expectations due to misaligned objectives, integration drag, and "hallucination risk."

"Moving fast only works if you are moving in the right direction. An unmanaged AI agent can generate 28.87 touchpoints for a single purchase—but if those interactions are biased or inaccurate, it will permanently alienate the customer."

Furthermore, with the rise of "AI scoring" and standardized benchmarks (HELM Safety, AIR-Bench), investors are now explicitly looking for real, scalable AI capabilities over marketing hype. The "governance lag" is a necessary investment in the stability of the model's future performance.

For the Strategic Leader

Conduct Regular Ethical Audits

Use tools like Trustwise or IBM Watsonx to review AI systems for bias, drift, and unintended consequences on an ongoing basis.

Anchor AI in First-Party Data

Ground your models in your organization's own data via RAG to reduce hallucinations and ensure 'on-brand' responses.

Implement the 10-20-70 Principle

Allocate 70% of your AI budget to people, process, and cultural transformation. Employee-centric organizations are 7× more likely to succeed.

Formalize AI Operating Models

Move from siloed pilots to centralized AI platforms that ensure consistency, scalability, and governance across the enterprise.


Conclusion: Winning the Right Way

The shift from 2023's "unbridled excitement" to 2025–2026's "nuanced evaluation" marks the beginning of the mature AI economy. In this new landscape, ethics is the primary engine of growth.

By prioritizing transparency, bias detection, and human oversight, you aren't just complying with the law—you are building a resilient, high-performance organization that users can trust. True champions don't just win; they win by adhering to ethical standards that ensure their data is cleaner, their engagement is deeper, and their ROI is 10× higher than the competition.

"Stop treating ethics as a brake. Start using it as the throttle."

Audit Your AI Roadmap Today

Ensure your strategy follows the 10-20-70 principle and anchors its value in the Virtuous Cycle of trust and first-party data.
